Research

XR Access supports all types of research dedicated to either making mainstream XR applications more accessible or using XR as an assistive technology.

At Cornell Tech, Professor Shiri Azenkot’s Enhancing Ability Lab is committed to developing technology that empowers people with disabilities. Prof. Azenkot and her students have been developing XR applications to help people with visual impairments with daily tasks, like navigating stairs and locating products in a supermarket.

If you’re researching XR accessibility yourself, consider joining our research network for updates on current research, funding opportunities, participant recruitment, and more!

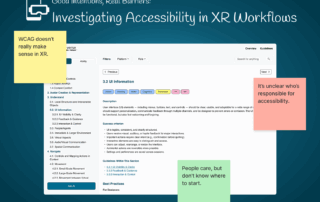

Good Intentions, Real Barriers: Investigating Accessibility in XR Workflows


IEEE VR 2025 – XRAccessibility Workshop

ISMAR 2024 – IDEATExR Workshop

CFP: Fourth Workshop on Inclusion, Diversity, Equity, Accessibility, Transparency and Ethics in XR (IDEATExR)

Scene Weaving: An Interactional Metaphor to Enable Blind Users’ Experience in 3D Virtual Environments

May 2nd, 2024

Microsoft researchers will showcase Scene Weaving, a new approach to visual accessibility for 3D spaces.

AI Scene Descriptions

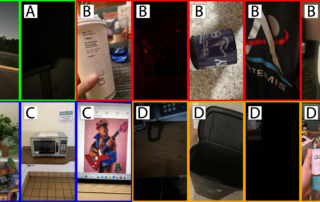

Authors: Ricky Gonzalez, Jazmin Collins, Shiri Azenkot, Cindy Bennett

Cornell Tech PhD students Ricky Gonzalez and Jazmin Collins, XR Access co-founder Shiri Azenkot, and Google accessibility researcher Cindy Bennett are investigating the potential of AI to describe scenes to blind and low vision (BLV) people. […]

IEEE VR 2024 – IDEATExR Workshop

XR Access will be cosponsoring a workshop on Inclusion, Diversity, Equity, Accessibility, Transparency and Ethics in XR (IDEATExR) in conjunction with the IEEE VR 2024 conference in Orlando, Florida, USA, from March 16–21, 2024.

Keynote Speaker: Dr. […]

Sighted Guides to Enhance Accessibility for Blind and Low Vision People in VR

Authors: Jazmin Collins*, Crescentia Jung*, Yeonju Jang (* indicates co-first authorship and equal contribution).

Our first project explored using the sighted guide technique in VR to support accessibility for blind and low vision people. We created a prototype with a guidance system and conducted a study with […]

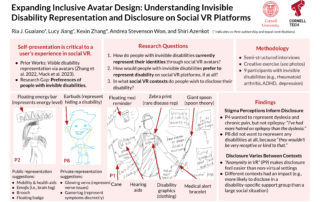

Exploring Self-Presentation for People with Invisible Disabilities in VR

Authors: Ria J. Gualano*, Lucy Jiang*, Kexin Zhang*, Andrea Stevenson Won, and Shiri Azenkot (* indicates co-first authorship and equal contribution).

Through this project, we aim to fill a gap in current accessibility research by focusing on the experiences of people with invisible disabilities (e.g., chronic […]

Making 360° Videos Accessible to Blind and Low Vision People

Authors: Lucy Jiang, Mahika Phutane, Shiri Azenkot.

While traditional videos are typically made accessible with audio description (AD), we lack an understanding of how to make 360° videos accessible while preserving their immersive nature. Through individual interviews and collaborative design workshops, we explore ways to improve 360° video […]

Making Nonverbal Cues Accessible to Facilitate Interpersonal Interactions in VR

Authors: Crescentia Jung*, Jazmin Collins*, Yeonju Jang, Jonathan Segal (* indicates co-first authorship and equal contribution).

This project explores how to make nonverbal cues accessible in VR for blind and low vision people. We first explored how to make gaze accessible in VR with a blind co-designer […]

Guide to Distributing Tools for VR Accessibility Accommodations

Authors: Jonathan Segal, Samuel Rodriquez, Akshaya Raghavan, Heysil Baez, Shiri Azenkot, Andrea Stevenson Won.

The distribution of tools for improving VR accessibility is currently fragmented. Companies usually share these frameworks on their own websites, while academics who publish such tools usually do so […]

Virtual Showdown

VR games are becoming common, but people with visual impairments are frequently left out of the fun. Some prior work has explored including audio cues as an accompaniment to visual VR experiences, while developers of accessible games have created audio-only versions of popular games for people with visual impairments. However, […]

AR Navigation

Navigating stairs can be a dangerous mobility challenge for people with low vision. Inadequate handrails, poorly marked steps, and other obstacles can reduce mobility and lead to accidents. While past research has proposed audio stair-navigation aids for blind people, no research on people with low vision has yet addressed this […]

Interactive 3D Models

Cornell Tech PhD student Lei Shi, faculty member Shiri Azenkot, and their collaborators are studying how to design educational 3D models for students with visual impairments. The researchers interviewed teachers of the visually impaired about the needs of their students, and demonstrated previously designed 3D-printed […]